The AI video generation landscape has reached a pivotal moment in 2026. Two models now dominate the conversation among creators, marketers, and developers: Seedance 2.0 from ByteDance and Sora 2 Pro from OpenAI. Both represent the cutting edge of what's possible in AI-generated video, yet they take fundamentally different approaches to solving the same creative challenges.

This comprehensive comparison examines every dimension that matters—technical capabilities, output quality, pricing models, workflow efficiency, and real-world performance—to help you make an informed decision about which model best serves your production needs.

The Foundation: Architecture and Core Capabilities

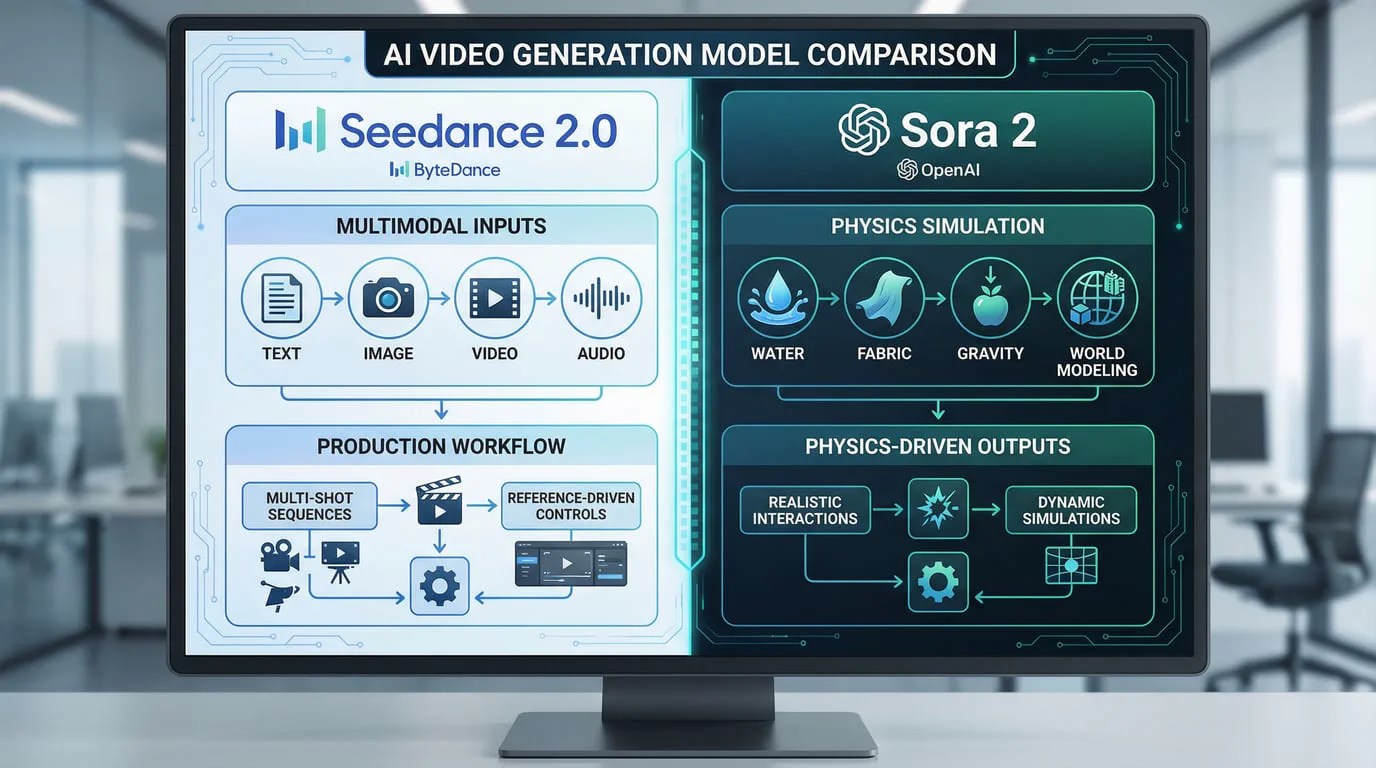

Seedance 2.0 represents ByteDance's answer to the multimodal video generation challenge. Built on a unified multimodal audio-video joint generation architecture, it supports text, image, audio, and video inputs simultaneously. This architectural decision gives Seedance 2.0 what ByteDance calls "the most comprehensive multimodal content reference and editing capabilities in the industry." The model can accept up to 12 reference assets in a single generation, drawn from per-type limits of up to 9 images, 3 videos, and 3 audio clips, with each video or audio input supporting up to 15 seconds of content.
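The input limits described above can be checked before a generation request is submitted. The following is a minimal sketch, not part of any ByteDance SDK: `ReferenceAsset` and `validate_references` are hypothetical names, and the code assumes the 12-asset figure is an overall cap that applies on top of the per-type limits.

```python
from dataclasses import dataclass

# Limits as quoted in this article. How they combine (an overall cap of 12
# on top of the per-type caps) is an assumption, not a documented rule.
MAX_TOTAL_ASSETS = 12
MAX_PER_TYPE = {"image": 9, "video": 3, "audio": 3}
MAX_CLIP_SECONDS = 15.0  # applies to video and audio references only


@dataclass
class ReferenceAsset:
    """Hypothetical description of one reference input."""
    kind: str             # "image", "video", or "audio"
    seconds: float = 0.0  # clip length; ignored for images


def validate_references(assets: list[ReferenceAsset]) -> list[str]:
    """Return a list of problems; an empty list means the set fits the limits."""
    problems = []
    if len(assets) > MAX_TOTAL_ASSETS:
        problems.append(
            f"{len(assets)} assets exceeds the total cap of {MAX_TOTAL_ASSETS}"
        )
    for kind, cap in MAX_PER_TYPE.items():
        count = sum(1 for a in assets if a.kind == kind)
        if count > cap:
            problems.append(f"{count} {kind} assets exceeds the cap of {cap}")
    for a in assets:
        if a.kind not in MAX_PER_TYPE:
            problems.append(f"unknown asset kind: {a.kind}")
        elif a.kind != "image" and a.seconds > MAX_CLIP_SECONDS:
            problems.append(
                f"{a.kind} reference is {a.seconds:g}s, "
                f"over the {MAX_CLIP_SECONDS:g}s limit"
            )
    return problems
```

Running a check like this locally avoids spending a generation attempt on a request that the service would presumably reject.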

Sora 2 Pro, conversely, builds on OpenAI's world simulation approach. The model excels at understanding and replicating real-world physics, making it particularly strong at generating content that requires accurate physical dynamics. OpenAI describes Sora 2 Pro as capable of handling "Olympic gymnastics routines, backflips on a paddleboard that accurately model the dynamics of buoyancy and rigidity, and triple axels while a cat holds on for dear life." Alongside this physics-first approach, Sora 2 Pro generates videos with synced audio and can create richly detailed, dynamic clips from natural language or images.

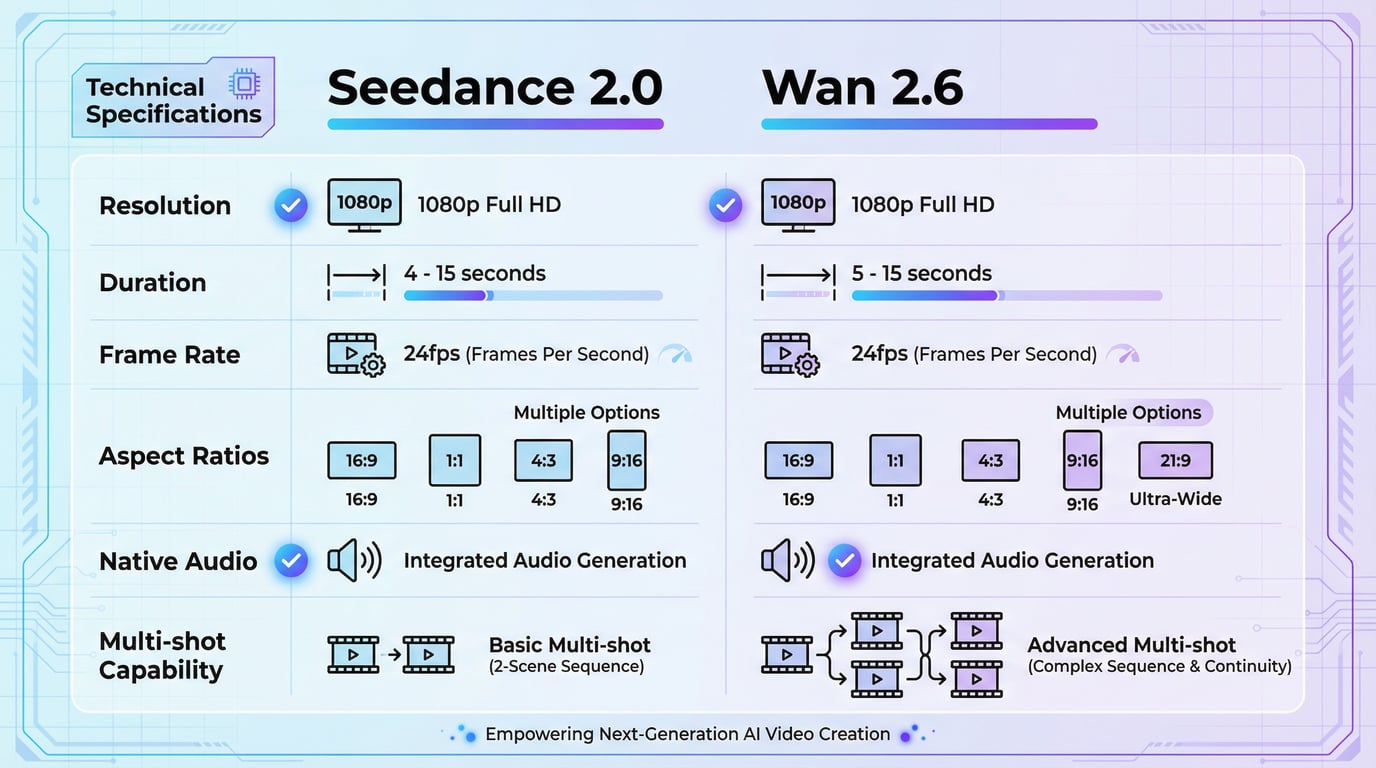

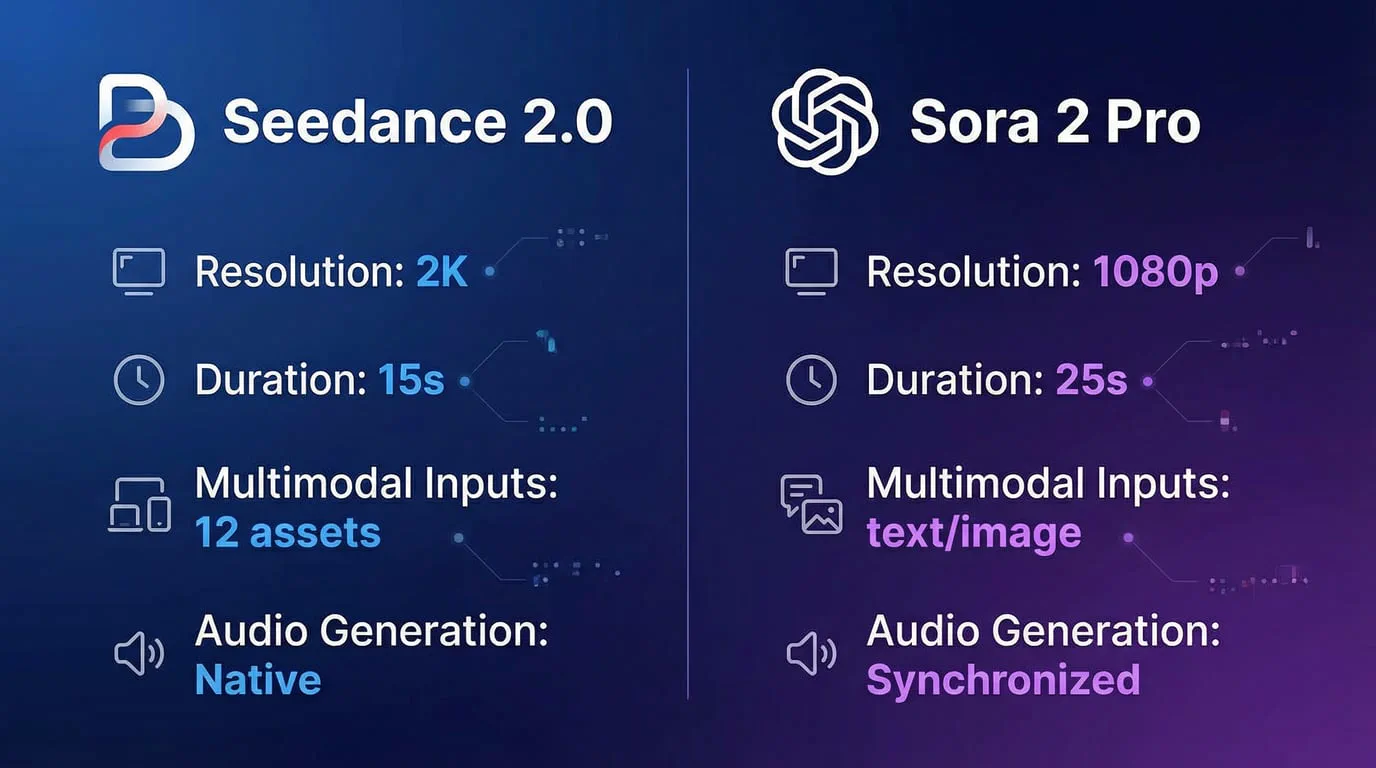

Technical Specifications: Resolution, Duration, and Output Quality

Resolution represents one of the most significant differentiators between these two models. Seedance 2.0 generates videos at native 2K resolution, supporting multiple aspect ratios including 16:9, 9:16, 4:3, 3:4, 21:9, and 1:1. This resolution advantage makes Seedance 2.0 particularly valuable for large-scale displays, high-definition advertising, and any content destined for professional production environments. The model generates videos from 4 to 15 seconds in length, with significantly improved consistency for faces, clothing, text, scenes, and visual styles.

Sora 2 Pro maxes out at 1080p resolution but compensates with longer duration capabilities. The Pro version supports up to 25 seconds of coherent generation in a single output, while the standard Sora 2 caps at 10-15 seconds. This extended duration enables complete narratives in a single generation without requiring multi-segment stitching. The model maintains visual and audio consistency across these longer narrative arcs, addressing one of the fundamental challenges in AI video generation.
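To make the stitching trade-off concrete, the number of separate generations needed to cover a target runtime is a ceiling division by each model's maximum clip length. This is a small sketch using the durations quoted in this article; `generations_needed` is an illustrative helper, not part of either vendor's tooling.

```python
import math

# Maximum single-generation durations quoted in this article, in seconds.
MAX_SECONDS = {"Seedance 2.0": 15, "Sora 2 Pro": 25}


def generations_needed(target_seconds: float, max_seconds: float) -> int:
    """Minimum number of clips needed to cover target_seconds back to back."""
    if target_seconds <= 0 or max_seconds <= 0:
        raise ValueError("durations must be positive")
    return math.ceil(target_seconds / max_seconds)


if __name__ == "__main__":
    # Compare how many clips a 60-second piece would need per model.
    for model, cap in MAX_SECONDS.items():
        print(model, generations_needed(60, cap))
```

Fewer segments means fewer cut points where continuity has to be preserved by hand, which is the practical benefit of the longer single-generation window.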

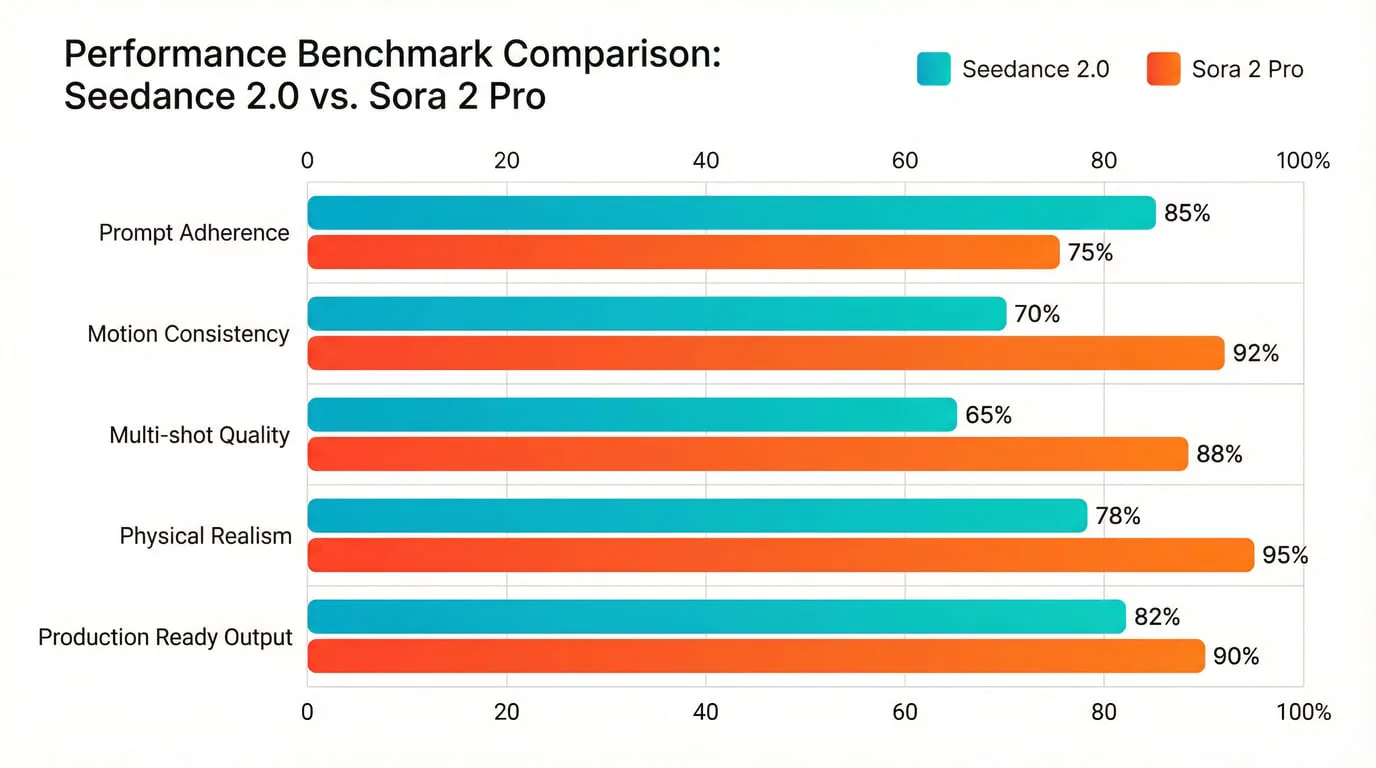

Independent testing across nine leading AI video models in early 2026 revealed nuanced performance characteristics. Sora 2 consistently ranked highest for physical realism and long-form continuity. Seedance 2.0 excelled in prompt adherence, multi-shot consistency, and production-ready output requiring minimal editing.

Multimodal Control: The Seedance 2.0 Advantage

Seedance 2.0's defining feature is its unprecedented multimodal reference system. The model doesn't just accept multiple input types—it understands how to use them together. When you provide reference videos, Seedance 2.0 can study motion logic, special effects, and character actions directly from the source material. Audio references enable the model to understand rhythm, atmosphere, and sound design, then replicate those qualities in the generated output. This capability extends to beat-matched visual transitions and phoneme-level lip-syncing, making Seedance 2.0 particularly powerful for music videos, dynamic presentations, and any content requiring tight audio-visual synchronization.

The practical implications of this multimodal approach are substantial. If you're creating branded content and need to maintain specific visual styles across multiple videos, you can feed Seedance 2.0 reference images that establish your brand aesthetic. If you're producing a series where character consistency matters, the model maintains stable character appearance across frames and shots, solving the common AI video problems of character drift and style inconsistency.

Sora 2 Pro takes a different approach. Rather than accepting multiple reference assets, it focuses on understanding natural language descriptions with exceptional depth. You can describe complex camera movements—dolly zooms, rack focuses, tracking shots, POV switches—and the model executes them accurately. The model's strength lies in its ability to simulate real-world physics and environmental interactions. Fight scenes, vehicle chases, explosions, and falling debris all behave according to realistic physical laws.

Audio Generation: Native Integration vs. Synchronized Output

Both models generate video with audio, but their approaches differ significantly. Seedance 2.0 features native audio-video joint generation through its unified architecture. The model automatically creates dialogue, ambient soundscapes, and real-time sound effects that match the visuals frame by frame. This eliminates the need for manual audio editing in post-production. The built-in audio generation has received particular praise from users, with one noting that "sound effects match the action perfectly, and the music beat sync feature is incredibly useful for dance and music content."

Sora 2 Pro generates videos with synchronized audio, meaning the audio is created to match the visual content but through a slightly different process. As a general-purpose video-audio generation system, it creates sophisticated background soundscapes, speech, and sound effects with a high degree of realism. Environmental audio integration means ambient sounds like wind, traffic, and footsteps generate contextually based on visual elements described in your prompt.

Multi-Shot Sequences and Narrative Continuity

Seedance 2.0 lets creators produce multi-shot sequences that flow naturally between camera angles and perspectives while maintaining visual continuity. This feature brings storytelling to life, making it perfect for cinematic scenes, conversations, and branded content that demand energy and engagement. The model can produce multiple shots with natural cuts and transitions within its 15-second generation window, so a single output can feel like an edited sequence rather than a single continuous clip.

A key differentiator lies in environmental consistency. Sora 2 videos can sometimes exhibit unnaturally smooth or blurry backgrounds between shots, which can break immersion. Seedance 2.0 significantly mitigates this issue, maintaining sharp background details and consistent lighting across cuts.

Sora 2 Pro's strength in multi-shot sequences comes from its extended 25-second duration capability. This longer timeframe allows for more complex narrative development within a single generation. The model maintains temporal coherence across these extended sequences, ensuring that character appearances, environmental details, and lighting remain consistent throughout.

Performance Benchmarks: Real-World Testing Results

Multiple independent evaluations have compared these models under controlled conditions. Analysis from early 2026 testing reveals that Seedance 2.0 demonstrates a 90%+ success rate in rendering complex physical motion, making it one of the most production-ready alternatives available.

Comparative testing using consistent prompts across both models shows distinct performance profiles. For straightforward generation from simple prompts, both models deliver excellent results. For maximum creative control with specific reference materials—a motion style to replicate, a rhythm to sync to, a template to follow—Seedance 2.0's multimodal reference system proves unmatched. For physical realism in scenarios involving complex dynamics and environmental interactions, Sora 2 remains the benchmark.

One analysis noted that "Sora remains impressive—especially in large-scale scene understanding—but Seedance closes the cinematic gap while surpassing it in controllability and stability." The assessment concluded that ByteDance didn't just catch up but optimized for creators, and in 2026, that's what wins.

Pricing and Accessibility: Cost-Efficiency Analysis

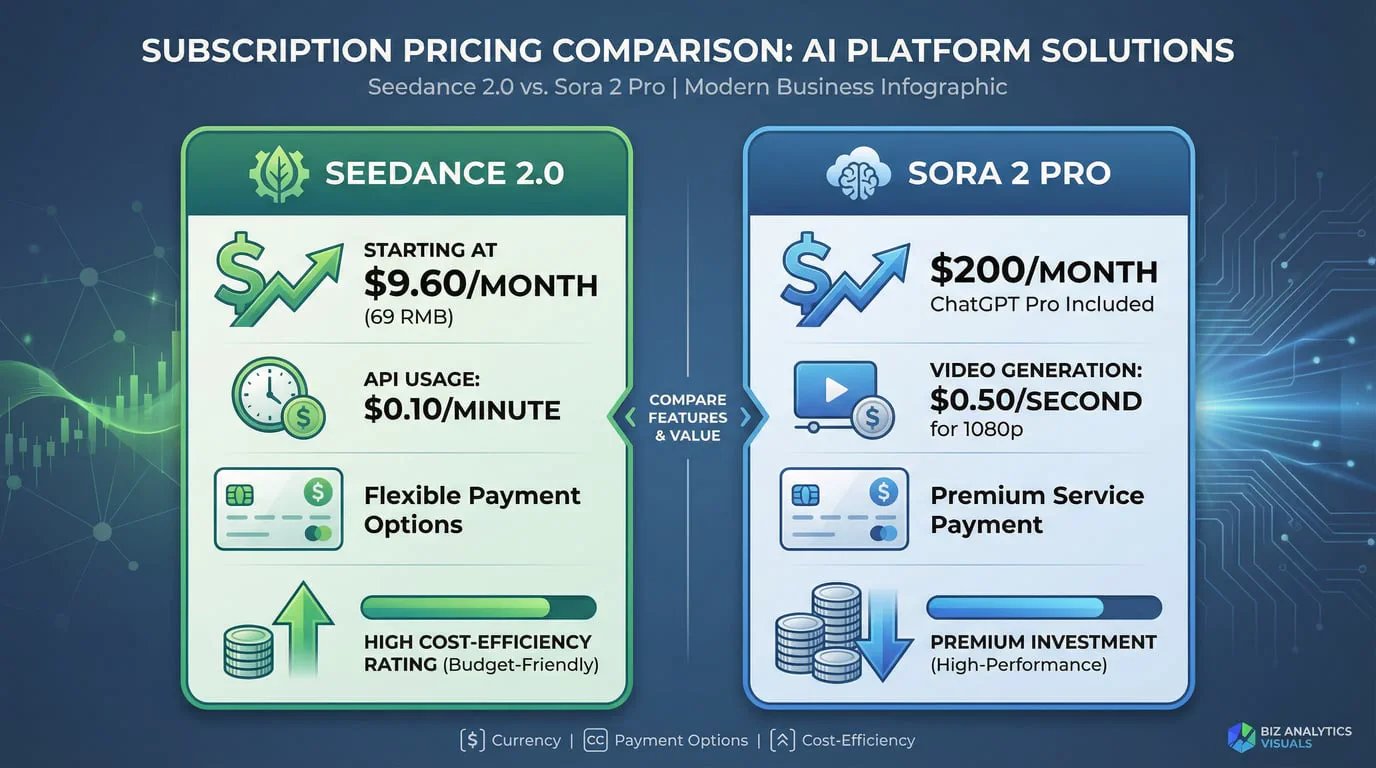

Pricing structures for these models differ dramatically, reflecting their different market positioning and access strategies. Seedance 2.0 offers multiple access pathways. Through ByteDance's Jimeng (Dreamina) platform, premium memberships start at approximately 69 RMB ($9.60 USD) per month. The Xiaoyunque App currently offers a limited-time free trial phase, and the Doubao App and Web interface provide a daily allowance of free video generations for casual creators.

For API access, Seedance 2.0 follows a pay-as-you-go model starting at approximately $0.10 per minute of generated video. This pricing structure makes it particularly cost-effective for production workflows. One analysis calculated that for a 90-minute project at traditional success rates, you might spend over $100 on failed generations with other models. With Seedance 2.0's high success rate, the same project comes in around $20—an effective 80% reduction in production costs.
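The reasoning behind the success-rate argument can be sketched with a simple retry model: if each attempt succeeds independently with probability p and failed attempts are still billed, the expected number of attempts per usable clip is 1/p. The sketch below is illustrative only. It does not reproduce the figures from the analysis cited above, and any success rates passed to it are assumptions supplied by the caller.

```python
def expected_spend(base_cost: float, success_rate: float) -> float:
    """Expected total spend when every failed attempt is paid for and retried.

    Assumes independent attempts that each succeed with probability
    success_rate, so the expected number of attempts per usable result
    is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    if base_cost < 0:
        raise ValueError("base_cost must be non-negative")
    return base_cost / success_rate
```

Under this model a 90% success rate inflates the nominal cost by a factor of about 1.11, while a 20% success rate inflates it by a factor of 5, which is why success rate can matter as much as the listed price.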

Sora 2 Pro requires a ChatGPT Pro subscription at $200 per month. This subscription provides access to Sora 2 Pro with 10,000 credits monthly. ChatGPT Plus users ($20/month) get limited Sora 2 access with 1,000 credits monthly, but this tier caps out at 720p resolution and 10-second videos with watermarks. The Pro tier unlocks 1080p resolution and removes watermarks, making it the minimum viable option for professional work.

For API access, Sora 2 Pro costs $0.50 per second for 1080p output. This pricing means a 25-second video costs $12.50 to generate, compared to roughly $0.025 for a 15-second video at Seedance 2.0's quoted rate of approximately $0.10 per minute.
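Because one rate is quoted per minute and the other per second, the comparison is easiest to check by computing the cost of a clip of a given length under each rate. This is a minimal sketch that uses only the API rates quoted in this article; the function names are illustrative, and real invoices may include factors not modeled here.

```python
# API rates as quoted in this article (USD).
SEEDANCE_USD_PER_MINUTE = 0.10  # approximate, pay-as-you-go
SORA_PRO_USD_PER_SECOND = 0.50  # 1080p output


def seedance_cost(seconds: float) -> float:
    """Cost of a Seedance 2.0 clip, converting the per-minute rate to seconds."""
    return seconds / 60 * SEEDANCE_USD_PER_MINUTE


def sora_pro_cost(seconds: float) -> float:
    """Cost of a Sora 2 Pro clip at the per-second 1080p rate."""
    return seconds * SORA_PRO_USD_PER_SECOND


if __name__ == "__main__":
    # Each model at its maximum single-generation length.
    print(f"Seedance 2.0, 15 s: ${seedance_cost(15):.3f}")
    print(f"Sora 2 Pro, 25 s:   ${sora_pro_cost(25):.2f}")
```

Putting both rates on the same per-second basis also makes it straightforward to compare clips of equal length rather than each model's maximum.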

Workflow Integration and Platform Accessibility

Access patterns for these models reflect different distribution strategies. Seedance 2.0 is available through BytePlus (ByteDance's enterprise platform) and third-party providers including WaveSpeedAI, Replicate, and Atlas Cloud. This multi-platform availability gives developers flexibility in how they integrate the model into their applications.

Geographic restrictions apply differently to each model. Seedance 2.0 initially launched with primary availability in China through the Jimeng platform, with international access gradually expanding through third-party API providers. Sora 2 Pro was initially available only in specific countries, with users outside supported regions requiring VPN access or third-party platform alternatives.

An emerging trend in 2026 is the rise of multi-model platforms that provide unified access to multiple AI video generation models through single interfaces. These platforms offer users access to both Seedance 2.0 and Sora 2 Pro alongside other leading video generation models, plus image generation capabilities using various cutting-edge models. This approach eliminates the need to maintain separate subscriptions and learn different interfaces for each model.

Use Case Optimization: When to Choose Each Model

The optimal model choice depends heavily on your specific workflow requirements and production goals. Seedance 2.0 excels in scenarios requiring template-based work, content remixing, and tight audio-visual synchronization. When you need to produce multiple variations of marketing content quickly, maintain character consistency across scenes, or generate videos that require minimal post-production editing, Seedance 2.0 delivers exactly that workflow optimization. The multimodal reference system makes it ideal for branded content where maintaining specific visual styles across multiple outputs is critical.

The model's native audio generation and beat-sync capabilities make it particularly powerful for music videos, dance content, and any scenario where rhythm and timing matter. One user noted, "I reference complex action sequences from films and Seedance 2.0 replicates them with my own characters. The motion precision is unlike anything I've seen in AI video."

Sora 2 Pro represents the best choice when physical realism and world simulation matter most. For scenarios involving complex physics—vehicle dynamics, water simulations, realistic character movements in challenging environments—Sora 2 Pro's physics-first approach delivers superior results. The extended 25-second duration makes it ideal for longer narrative sequences that need to maintain coherence across multiple story beats within a single generation.

For straightforward text-to-video generation where you're describing a scene without providing reference materials, both models perform excellently. The choice then comes down to whether you prioritize resolution (Seedance 2.0's 2K output) or duration (Sora 2 Pro's 25-second maximum).

Production Workflow Considerations

Real-world production workflows often involve multiple stages: ideation, generation, review, iteration, and finalization. Seedance 2.0's high success rate (90%+) means fewer wasted generations and faster iteration cycles. The ability to provide reference materials upfront reduces the number of generation attempts needed to achieve your desired result. When you can show the model exactly what motion, style, or atmosphere you want rather than describing it in text, you eliminate much of the ambiguity that leads to unsatisfactory outputs.

The natural language control in Seedance 2.0 has been praised for its intuitiveness. One user reported, "I just describe what I want to reference and how, and the model understands perfectly." This ease of use reduces the learning curve and allows creators to focus on creative decisions rather than prompt engineering.

Sora 2 Pro's workflow centers on detailed prompt engineering. The model excels at following complex, specific instructions, but achieving optimal results requires understanding how to structure prompts effectively. Camera angles need to be specified explicitly—"close-up of hands" or "wide aerial view"—to avoid random framing. The model's strength in understanding cinematic language means that creators with film production backgrounds can leverage familiar terminology to achieve precise results.
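One way to make explicit framing a habit is to assemble prompts from named parts so the shot type can never be left out. The helper below is a hypothetical convention for organizing prompt text, not an official Sora prompt format; the field names and the sentence layout are assumptions.

```python
def build_prompt(
    framing: str,
    subject: str,
    action: str,
    camera_move: str = "",
    setting: str = "",
) -> str:
    """Assemble a text prompt that always states the shot framing first."""
    framing = framing.strip()
    if not framing:
        raise ValueError(
            "framing must be stated explicitly, e.g. 'wide aerial view'"
        )
    # Capitalize only the first character so terms like "POV" keep their case.
    lead = framing[0].upper() + framing[1:]
    parts = [f"{lead}: {subject.strip()} {action.strip()}"]
    if setting.strip():
        parts.append(f"Setting: {setting.strip()}")
    if camera_move.strip():
        parts.append(f"Camera: {camera_move.strip()}")
    return ". ".join(parts) + "."


if __name__ == "__main__":
    print(
        build_prompt(
            framing="wide aerial view",
            subject="a cyclist",
            action="descends a mountain road",
            camera_move="slow tracking shot",
        )
    )
```

Keeping framing as a required argument means a missing shot description fails loudly in code instead of silently producing random framing in the output.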

API Integration and Developer Experience

For developers building AI video generation into their products, both models offer capable APIs with workable pricing structures, though neither has reached full enterprise infrastructure maturity. The landscape shifted rapidly through 2025 and early 2026, with Seedance 2.0's launch, Sora's troubled initial rollout and subsequent stabilization, and ongoing API expansions all happening within months of each other.

Seedance 2.0's API through Volcengine provides programmatic access to the full multimodal generation capabilities. Developers can pass multiple asset types in a single API call, with the model automatically understanding each input's role and maintaining consistency across all provided references. The approximately $0.10 per minute pricing makes it cost-effective for applications requiring high-volume generation.

Sora 2 Pro's API access has gradually expanded following the initial consumer release. The API pricing of $0.50 per second for 1080p output positions it as a premium option. For applications where physical realism and extended duration are critical requirements, this premium pricing may be justified by the superior output quality in those specific dimensions.

Comparative Analysis: Key Differentiators

| Feature | Seedance 2.0 | Sora 2 Pro |
|---|---|---|
| Maximum Resolution | 2K | 1080p |
| Video Duration | 4-15 seconds | Up to 25 seconds |
| Multimodal Inputs | Text, plus up to 12 reference assets (max 9 images, 3 videos, 3 audio clips) | Text, images |
| Audio Generation | Native joint audio-video | Synchronized audio |
| Aspect Ratios | 16:9, 9:16, 4:3, 3:4, 21:9, 1:1 | Standard formats |
| Subscription Cost | From $9.60/month | $200/month (ChatGPT Pro) |
| API Pricing | ~$0.10/minute | $0.50/second (1080p) |
| Success Rate | 90%+ reported | High (specific rate not disclosed) |
| Primary Strength | Multimodal control, consistency | Physical realism, duration |
| Best For | Template-based work, brand consistency | Physics simulation, long narratives |

The Unified Platform Advantage

Rather than choosing between these models, many production teams now use multi-model platforms that provide access to both Seedance 2.0 and Sora 2 Pro through a single interface. This approach offers several advantages: you can select the optimal model for each specific task without maintaining separate subscriptions, compare outputs from different models side-by-side, and switch between models as your project requirements change.

Platforms offering unified access to multiple AI video and image generation models eliminate the friction of managing multiple accounts, learning different interfaces, and tracking separate credit systems. For teams producing diverse content types, this flexibility proves invaluable. You might use Seedance 2.0 for branded social media content where consistency and quick turnaround matter, then switch to Sora 2 Pro for hero videos where physical realism and extended duration justify the higher per-video cost.

Accessing Advanced AI Video Generation

For creators and businesses looking to leverage these cutting-edge models, we provide convenient access to both Seedance 2.0 and Sora 2 Pro alongside other leading video generation models. Our platform also includes access to multiple advanced image generation models including Flux, Stable Diffusion, DALL-E 3, and others, creating a comprehensive suite for all your AI content generation needs.

Explore Seedance 2.0: https://seadanceai.com/seedance-2

Explore Sora 2 Pro: https://seadanceai.com/sora-2

This unified approach eliminates the complexity of managing multiple subscriptions and platforms while giving you the flexibility to choose the right model for each project. Whether you need Seedance 2.0's multimodal control for branded content or Sora 2 Pro's physical realism for cinematic sequences, you can access both through a single, streamlined interface.

Future Trajectory and Model Evolution

The AI video generation landscape continues evolving rapidly. Both ByteDance and OpenAI are actively iterating on their models, with improvements in generation speed, output quality, and feature sets arriving regularly. The competitive pressure between these leading models drives innovation that benefits all users.

By late 2026, industry observers expect the generation-review-iterate cycle to shrink to seconds rather than minutes. This transformation will change AI video from a production tool into a creative instrument—something you play rather than something you operate.

The convergence of capabilities means that both models will likely address their current limitations. Seedance 2.0 may extend its maximum duration, while Sora 2 Pro could add more sophisticated multimodal input handling. The gap between models narrows as each incorporates the other's strengths.

Making Your Decision

Choosing between Seedance 2.0 and Sora 2 Pro ultimately depends on your specific production requirements, budget constraints, and workflow preferences. Consider these decision factors:

Choose Seedance 2.0 when you need:

- Higher resolution output (2K) for professional displays or advertising
- Multimodal reference capabilities with specific style, motion, or audio templates
- Cost-effective production at scale with high success rates
- Native audio-video synchronization with beat-matching capabilities
- Multiple variations of branded content with consistent visual identity
- Rapid iteration with minimal post-production editing

Choose Sora 2 Pro when you need:

- Extended duration (up to 25 seconds) for complete narrative sequences
- Superior physical realism for complex dynamics and environmental interactions
- Longer-form storytelling within single generations
- Integration with existing ChatGPT Pro workflows
- Maximum quality for scenarios involving realistic physics simulation

Consider a multi-model platform when you need:

- Flexibility to choose the optimal model for each specific project
- Access to both models without maintaining separate subscriptions
- Ability to compare outputs side-by-side before committing to final renders
- Comprehensive toolset including both video and image generation capabilities
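The decision factors above can be condensed into a rough rule of thumb. The sketch below is one possible encoding, not a definitive selector: `Requirements` and `suggest_model` are hypothetical names, the hard limits are the duration and resolution figures quoted in this article, and the tie-breaking order is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    """Hypothetical summary of what a single clip needs."""
    duration_seconds: float
    needs_above_1080p: bool = False
    has_reference_assets: bool = False  # style, motion, or audio references
    physics_heavy: bool = False         # complex dynamics, water, vehicles


def suggest_model(req: Requirements) -> str:
    """Suggest a model from the limits and strengths described in this article."""
    # Hard constraints first: duration windows and maximum resolution.
    seedance_ok = 4 <= req.duration_seconds <= 15
    sora_ok = 0 < req.duration_seconds <= 25 and not req.needs_above_1080p

    if seedance_ok and not sora_ok:
        return "Seedance 2.0"
    if sora_ok and not seedance_ok:
        return "Sora 2 Pro"
    if not seedance_ok and not sora_ok:
        return "neither in a single generation"

    # Both fit: fall back to the stated strengths of each model.
    if req.has_reference_assets and not req.physics_heavy:
        return "Seedance 2.0"
    if req.physics_heavy and not req.has_reference_assets:
        return "Sora 2 Pro"
    return "either (compare outputs from both)"
```

For example, `suggest_model(Requirements(duration_seconds=20))` returns `"Sora 2 Pro"` because only that model's duration window covers a 20-second clip, while a 10-second clip with reference assets and no heavy physics returns `"Seedance 2.0"`.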

Conclusion: Two Paths to Excellence

Seedance 2.0 and Sora 2 Pro represent two distinct philosophies in AI video generation. Seedance 2.0 optimizes for creator control, offering unprecedented multimodal input capabilities that let you show the model exactly what you want rather than describing it. This approach, combined with native 2K resolution and cost-effective pricing, makes it ideal for production workflows requiring consistency, efficiency, and creative control.

Sora 2 Pro prioritizes physical realism and world simulation, excelling at scenarios where accurate physics and extended narrative duration matter most. Its 25-second maximum duration and superior handling of complex dynamics make it the benchmark for cinematic realism.

Neither model is universally superior—each excels in different dimensions. The best choice depends on your specific use case, production requirements, and budget constraints. For many creators and production teams, the optimal solution involves having access to both models through a unified platform, allowing you to select the right tool for each specific task.

As AI video generation continues its rapid evolution, both models will improve and expand their capabilities. The competition between these leading approaches drives innovation that benefits the entire creative community. Whether you choose Seedance 2.0's multimodal control or Sora 2 Pro's physical realism—or leverage both through a multi-model platform—you're working with the most advanced AI video generation technology available in 2026.

The future of video production is here, and it's more accessible, more powerful, and more creative than ever before.